Cyborg cicadas play Pachelbel’s Canon

Hacker News

MAY 2, 2025

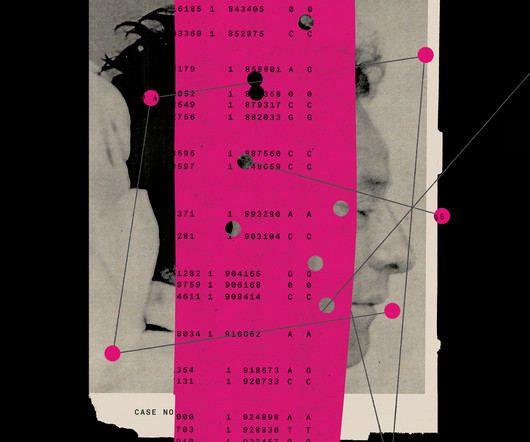

For instance, in 2015, Texas A&M scientists found that implanting electrodes into a cockroach's ganglion (the neuron cluster that controls its front legs) let them steer the roaches successfully 60 percent of the time. The idea was to use the insects as hybrid robots for search-and-rescue applications.
