Data lake

Dataconomy

JULY 7, 2025

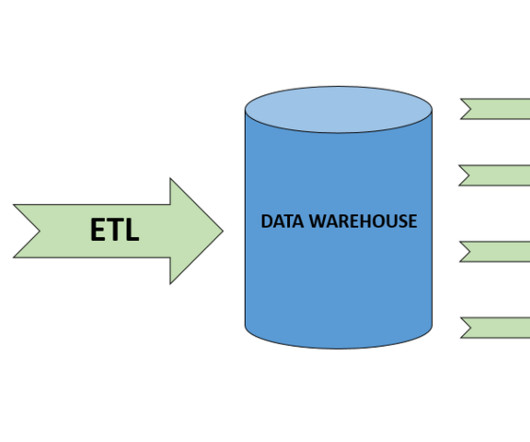

Data lakes have emerged as a key approach to handling the large volumes of raw data that organizations now generate. Unlike traditional storage systems, which typically expect data to fit a predefined structure, a data lake lets organizations store structured data alongside unstructured data of varying types and formats.
