Hybrid Vs. Multi-Cloud: 5 Key Comparisons in Kafka Architectures

Smart Data Collective

AUGUST 17, 2022

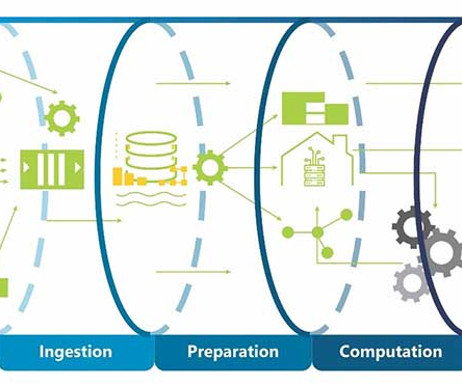

You can use an Apache Kafka cluster to move data from on-premises hardware to a data lake built on cloud services such as Amazon S3. 5 Key Comparisons in Different Apache Kafka Architectures. Step 2: Create a Data Catalog table.
