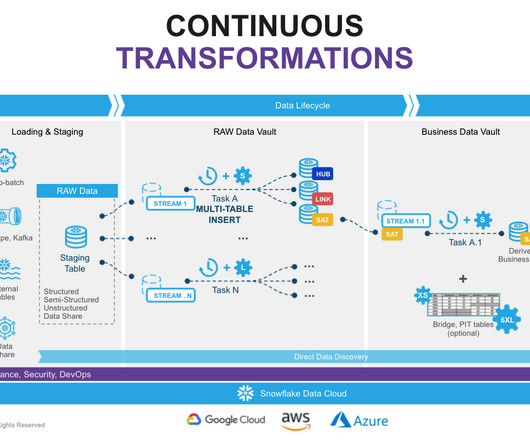

Maximize the Power of dbt and Snowflake to Achieve Efficient and Scalable Data Vault Solutions

phData

AUGUST 10, 2023

Implementing a data vault architecture requires integrating multiple technologies to support its design principles and meet the organization’s requirements. Model-level data validations, combined with a data observability framework, help address the data vault’s data quality challenges.
