Build a Serverless News Data Pipeline using ML on AWS Cloud

KDnuggets

NOVEMBER 18, 2021

This is a guide on how to build a serverless data pipeline on AWS with a machine learning model deployed as a SageMaker endpoint.

insideBIGDATA

OCTOBER 25, 2023

In this sponsored post, Devika Garg, PhD, Senior Solutions Marketing Manager for Analytics at Pure Storage, believes that in the current era of data-driven transformation, IT leaders must embrace complexity by simplifying their analytics and data footprint.

Analytics Vidhya

SEPTEMBER 3, 2022

Introduction In this article, we will learn about machine learning using Spark. The post Machine learning Pipeline in Pyspark appeared first on Analytics Vidhya. Our previous articles discussed Spark databases, installation, and working of Spark in Python.

Analytics Vidhya

FEBRUARY 6, 2023

Introduction The demand for data to feed machine learning models, data science research, and time-sensitive insights is higher than ever; thus, processing the data becomes complex. To make these processes efficient, data pipelines are necessary.

Analytics Vidhya

JUNE 14, 2024

While many ETL tools exist, dbt (data build tool) is emerging as a game-changer. This article dives into the core functionalities of dbt, exploring its unique strengths and how […] The post Transforming Your Data Pipeline with dbt(data build tool) appeared first on Analytics Vidhya.

Analytics Vidhya

JUNE 15, 2022

Introduction ETL is the process that extracts the data from various data sources, transforms the collected data, and loads that data into a common data repository. Azure Data Factory […]. The post Building an ETL Data Pipeline Using Azure Data Factory appeared first on Analytics Vidhya.

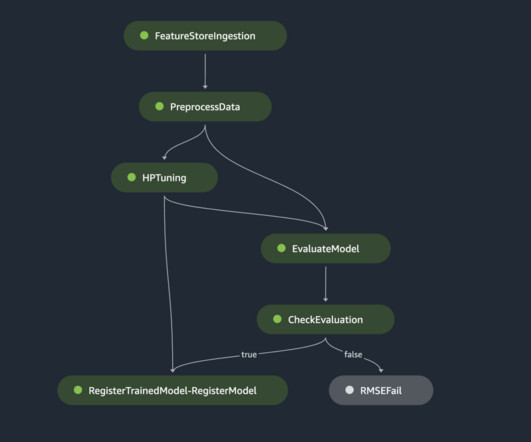

AWS Machine Learning Blog

OCTOBER 24, 2024

Machine learning (ML) helps organizations to increase revenue, drive business growth, and reduce costs by optimizing core business functions such as supply and demand forecasting, customer churn prediction, credit risk scoring, pricing, predicting late shipments, and many others.

IBM Data Science in Practice

MARCH 8, 2023

Feature Platforms: A New Paradigm in Machine Learning Operations (MLOps) Operationalizing Machine Learning is Still Hard OpenAI introduced ChatGPT. The AI and Machine Learning (ML) industry has continued to grow at a rapid rate over recent years.

Data Science Dojo

JULY 5, 2023

Machine Learning (ML) is a powerful tool that can be used to solve a wide variety of problems. However, building and deploying a machine-learning model is not a simple task. It requires a comprehensive understanding of the end-to-end machine learning lifecycle.

How to Learn Machine Learning

DECEMBER 24, 2024

If you’re diving into the world of machine learning, AWS Machine Learning provides a robust and accessible platform to turn your data science dreams into reality. Introduction Machine learning can seem overwhelming at first – from choosing the right algorithms to setting up infrastructure.

Smart Data Collective

OCTOBER 17, 2022

Data pipelines automatically fetch information from various disparate sources for further consolidation and transformation into high-performing data storage. There are a number of challenges in data storage, which data pipelines can help address. Choosing the right data pipeline solution.

Data Science Dojo

OCTOBER 2, 2023

ChatGPT can also use Wolfram Language to perform more complex tasks, such as simulating physical systems or training machine learning models. Deploy machine learning Models: You can use the plugin to train and deploy machine learning models.

KDnuggets

NOVEMBER 17, 2021

Don’t Waste Time Building Your Data Science Network; 19 Data Science Project Ideas for Beginners; How I Redesigned over 100 ETL into ELT Data Pipelines; Anecdotes from 11 Role Models in Machine Learning; The Ultimate Guide To Different Word Embedding Techniques In NLP.

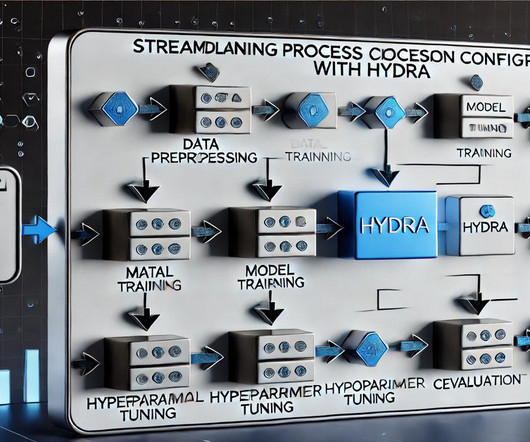

Pickl AI

NOVEMBER 29, 2024

Summary: Hydra simplifies process configuration in Machine Learning by dynamically managing parameters, organising configurations hierarchically, and enabling runtime overrides. As the global Machine Learning market, valued at USD 35.80 These issues can hinder experimentation, reproducibility, and workflow efficiency.

Dataconomy

MAY 6, 2025

Failure analysis machine learning is a critical aspect of ensuring that machine learning models perform reliably in production environments. What is failure analysis machine learning? Importance of production readiness When teams release models, they frequently face a gap between expectations and reality.

NOVEMBER 7, 2023

“Data is at the center of every application, process, and business decision,” wrote Swami Sivasubramanian, VP of Database, Analytics, and Machine Learning at AWS, and I couldn’t agree more. A common pattern customers use today is to build data pipelines to move data from Amazon Aurora to Amazon Redshift.

JUNE 22, 2023

Data engineering startup Prophecy is giving a new turn to data pipeline creation. Known for its low-code SQL tooling, the California-based company today announced data copilot, a generative AI assistant that can create trusted data pipelines from natural language prompts and improve pipeline quality …

Precisely

DECEMBER 28, 2023

Business success is based on how we use continuously changing data. That’s where streaming data pipelines come into play. This article explores what streaming data pipelines are, how they work, and how to build this data pipeline architecture. What is a streaming data pipeline?

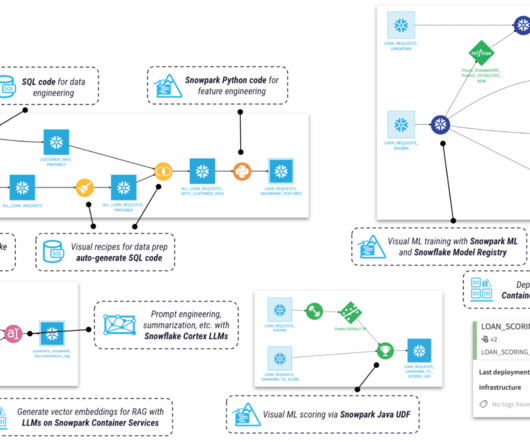

insideBIGDATA

JUNE 4, 2024

Modern data pipeline platform provider Matillion today announced at Snowflake Data Cloud Summit 2024 that it is bringing no-code Generative AI (GenAI) to Snowflake users with new GenAI capabilities and integrations with Snowflake Cortex AI, Snowflake ML Functions, and support for Snowpark Container Services.

IBM Journey to AI blog

MAY 20, 2024

Machine learning (ML) has become a critical component of many organizations’ digital transformation strategy. The answer lies in the data used to train these models and how that data is derived.

Towards AI

OCTOBER 31, 2024

By leveraging GenAI, we can streamline and automate data-cleaning processes: Clean data to use AI? Clean data through GenAI! Three ways to use GenAI for better data Improving data quality can make it easier to apply machine learning and AI to analytics projects and answer business questions.

Dataconomy

JANUARY 24, 2025

A principal data scientist with international experience and former lecturer in Machine Learning, Nataliya has led AI initiatives in the manufacturing, retail, and public sectors. Then I lead data science projects, designing models, laying out data pipelines, and making sure everything is tested thoroughly.

AWS Machine Learning Blog

SEPTEMBER 18, 2023

Machine learning (ML) is becoming increasingly complex as customers try to solve more and more challenging problems. This complexity often leads to the need for distributed ML, where multiple machines are used to train a single model. This allows building end-to-end data pipelines and ML workflows on top of Ray.

Data Science Dojo

JULY 6, 2023

Data engineering tools are software applications or frameworks specifically designed to facilitate the process of managing, processing, and transforming large volumes of data. It integrates well with other Google Cloud services and supports advanced analytics and machine learning features.

AWS Machine Learning Blog

DECEMBER 15, 2023

We are excited to announce the launch of Amazon DocumentDB (with MongoDB compatibility) integration with Amazon SageMaker Canvas, allowing Amazon DocumentDB customers to build and use generative AI and machine learning (ML) solutions without writing code. Analyze data using generative AI. Prepare data for machine learning.

AWS Machine Learning Blog

NOVEMBER 26, 2024

Neuron is the SDK used to run deep learning workloads on Trainium and Inferentia based instances. High latency may indicate high user demand or inefficient data pipelines, which can slow down response times. By identifying these signals early, teams can quickly respond in real time to maintain high-quality user experiences.

Data Science Dojo

FEBRUARY 20, 2023

These tools will help you streamline your machine learning workflow, reduce operational overheads, and improve team collaboration and communication. Machine learning (ML) is the technology that automates tasks and provides insights. It allows data scientists to build models that can automate specific tasks.

JANUARY 31, 2025

Observo AI, an artificial intelligence-powered data pipeline company that helps companies solve observability and security issues, said Thursday it has raised $15 million in seed funding led by Felici

Alation

MAY 16, 2023

But with the sheer amount of data continually increasing, how can a business make sense of it? Robust data pipelines. What is a Data Pipeline? A data pipeline is a series of processing steps that move data from its source to its destination. The answer?

JUNE 2, 2025

Using data from 1990 to 2023, we apply a robust data pipeline comprised of six machine learning models and sequential squeeze feature selection incorporating eleven economic, industrial, and energy consumption variables. We have modelled the scenario with an average prediction accuracy of 96.21%.

AWS Machine Learning Blog

DECEMBER 4, 2024

Businesses are under pressure to show return on investment (ROI) from AI use cases, whether predictive machine learning (ML) or generative AI. About the Authors Isaac Cameron is Lead Solutions Architect at Tecton, guiding customers in designing and deploying real-time machine learning applications.

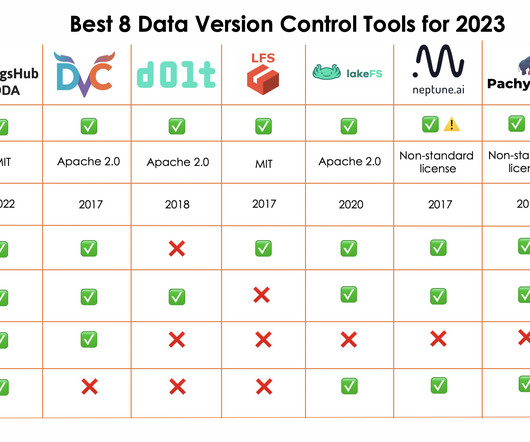

DagsHub

DECEMBER 11, 2023

The following points illustrate some of the main reasons why data versioning is crucial to the success of any data science and machine learning project: Storage space One of the reasons for versioning data is to be able to keep track of multiple versions of the same data, which obviously need to be stored as well.

phData

NOVEMBER 4, 2024

Dataiku is an advanced analytics and machine learning platform designed to democratize data science and foster collaboration across technical and non-technical teams. Snowflake excels in efficient data storage and governance, while Dataiku provides the tooling to operationalize advanced analytics and machine learning models.

Dataconomy

MAY 16, 2023

Machine learning engineer vs data scientist: two distinct roles with overlapping expertise, each essential in unlocking the power of data-driven insights. As businesses strive to stay competitive and make data-driven decisions, the roles of machine learning engineers and data scientists have gained prominence.

Dataconomy

APRIL 3, 2025

He spearheads innovations in distributed systems, big-data pipelines, and social media advertising technologies, shaping the future of marketing globally. Here, he was pivotal in building scalable, high-impact systems that leverage big-data processing and machine learning. His work today reflects this vision.

Dataconomy

FEBRUARY 21, 2020

Data professionals across industries recognize they must effectively harness data for their businesses to innovate and gain competitive advantage. High quality, reliable data forms the backbone for all successful data endeavors, from reporting and analytics to machine learning.

Pickl AI

DECEMBER 26, 2024

Summary: “Data Science in a Cloud World” highlights how cloud computing transforms Data Science by providing scalable, cost-effective solutions for big data, Machine Learning, and real-time analytics. This accessibility democratises Data Science, making it available to businesses of all sizes.

Dataconomy

MARCH 20, 2025

TensorFlow has revolutionized the field of machine learning and deep learning since its inception. Developed by Google, this open-source framework allows developers and researchers to efficiently model complex data structures and perform high-level computations.

insideBIGDATA

MARCH 4, 2024

Hammerspace, the company orchestrating the Next Data Cycle, unveiled the high-performance NAS architecture needed to address the requirements of broad-based enterprise AI, machine learning and deep learning (AI/ML/DL) initiatives and the widespread rise of GPU computing both on-premises and in the cloud.

AWS Machine Learning Blog

MARCH 5, 2025

However, traditional machine learning approaches often require extensive data-specific tuning and model customization, resulting in lengthy and resource-heavy development. He builds machine learning pipelines and recommendation systems for product recommendations on the Detail Page.

The MLOps Blog

MAY 17, 2023

Often the Data Team, comprising Data and ML Engineers, needs to build this infrastructure, and this experience can be painful. However, efficient use of ETL pipelines in ML can help make their life much easier. What is an ETL data pipeline in ML? Let’s look at the importance of ETL pipelines in detail.

Dataconomy

MARCH 11, 2025

Financial markets: Continuous trading data and market movements. Event identification and analysis Techniques employed in CEP for event identification include pattern recognition, machine learning, and trend analysis. Apache Kafka: Vital for creating real-time data pipelines and streaming applications.
