Feature scaling: A way to elevate data potential

Data Science Dojo

FEBRUARY 14, 2024

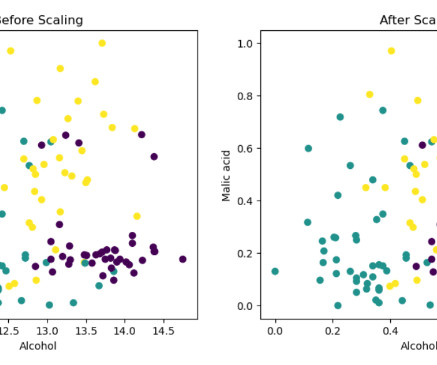

Normalization, a feature scaling technique, is often applied as part of data preparation for machine learning. The goal of normalization is to rescale the numeric columns in a dataset to a common scale, without distorting the relative differences between values or losing information.
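To make the idea concrete, here is a minimal sketch of one common form of normalization, min-max scaling, which maps each column to the range [0, 1]. The article does not say which variant it has in mind, so min-max is an assumption here. The function name `min_max_normalize` and the `ages` and `incomes` columns are made up for illustration.

```python
def min_max_normalize(values):
    """Rescale a list of numbers to the range [0, 1] using min-max scaling."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant column has no spread; map every value to 0.0
        # (an arbitrary convention chosen for this sketch).
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


# Hypothetical columns measured on very different scales.
ages = [20, 30, 40, 60]
incomes = [30000, 50000, 70000, 110000]

print(min_max_normalize(ages))     # [0.0, 0.25, 0.5, 1.0]
print(min_max_normalize(incomes))  # [0.0, 0.25, 0.5, 1.0]
```

After scaling, both columns share the same [0, 1] range, and the relative spacing between values within each column is unchanged. In practice, a library scaler such as scikit-learn's `MinMaxScaler` is the usual choice, since it stores the fitted minimum and maximum so the same transformation can be applied to new data.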
