Unbundling the Graph in GraphRAG

O'Reilly Media

NOVEMBER 19, 2024

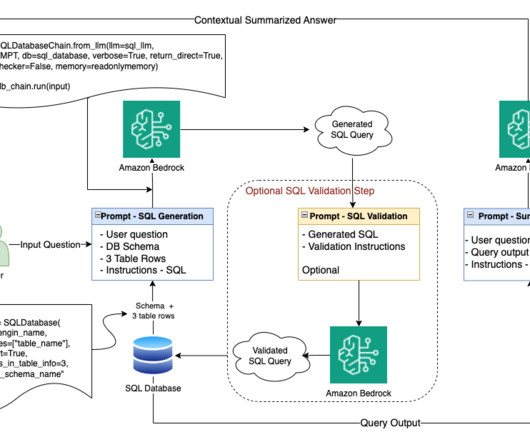

Store these chunks in a vector database, indexed by their embedding vectors. The various flavors of RAG borrow from recommender systems practices, such as the use of vector databases and embeddings. Here’s a simple rough sketch of RAG: Start with a collection of documents about a domain. Split each document into chunks.

Let's personalize your content